Year of 101s, Part 1 – Node January

Summary – What was it all about?

I set out to spend January learning some node development fundementals.

Part #1 – Intro

I started with a basic intro to using node – a Hello World – which covered what node.js is, how to create the most basic of all programs, and mentioned some of the development environments.

Part #2 – Serving web content

Second was creating a very simple node web server, which covered using nodemon to develop your node app, the concept of exports, basic request routing, and serving various content types.

Part #3 – A basic API

Next was a simple API implementation, where I proxy calls to the Asos API, return a remapped subset of the data returned, reworked the routing creating basic search functionality and a detail page, and touched on being able to pass in command line arguements.

Part #4 – Basic deployment and hosting with Appharbor, Azure, and Heroku

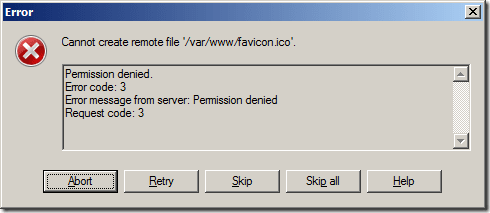

Possibly the most interesting and fun post for me to work on involved deploying the node code on to three cloud hosting solutions where I discovered the oddities each provider has, various solutions to the problems this raises, as well as some debugging cleverness (nice work, Heroku!). The simplicity of a git-remote-push-deploy process is incredible, and really makes quick application development and hosting even more enjoyable!

Part #5 – Packages

Another interesting one was getting to play with node packages, the node package manager (npm), the express web framework, jade templating engine, and stylus css pre-processor, and deploying node apps with packages to cloud hosting.

Part #6 – Web-based development

The final part covered the fantastic Cloud9IDE, including a (very) basic intro to github, and how Cloud9 can still be used in developing and deploying directly to Azure, Appharbor, or Heroku.

What did I get out of it?

I really got into githubbing and OSSing, and really had to try hard to not over stretch myself as I had starting forking repos to try and make a few tweaks to things whilst working on the node month.

It has been extremely inspiring and has opened up so many other random tangents for me to explore in other projects at some other time. Very motivating stuff.

I’ve now got a month of half decent blog posts – I had only intended to do a total of 4 posts but including this one I’ve done 7, since I kept adding more information as it turned up and needed to split a few posts into two.

Also I’ve learned a bit about blogging; trying to do posts well in advance allowed me to build up the details once I’d discovered more whilst working on subsequent posts. For example, how Appharbor and Azure initially track master – but can be configured to track different branches. Also, debugging with Heroku only came up whilst working with packages in Heroku.

Link list

Node tutorials and references

Setting up a node development environment on Windows

Node Beginner – a great article, and I’ve also bought the associated eBooks.

nodejs.org – the official node site, the only place to go for reference

Understanding Javascript better

Execution in The Kingdom of Nouns –

Object Orientation and Inheritance in Javascript

Appharbor

Appharbor and git

Heroku

Heroku toolbelt download and reference

node on Heroku

Azure

Checkout what Azure can do!

February – coming up, Samsung Smart TV App Development!

Yeah, seriously. How random is that?.. 🙂