The rise of the conversational interface shows no signs of slowing down; chatbots are the new apps, Siri is getting old already, and although it’s still awkward to say “Ok Google” at your watch or “hey cortana” to your phone, somehow we’re happy to ask “Alexa” for the news, weather, or to play something by Bruno Mars.

The Amazon Echo looks like the first generation of a socially acceptable, almost natural, voice controlled conversational interface.

It’s that first step towards the Star Trek computer; you can’t quite say “Alexa, locate Commander Data” (although you can ask her to beam you up, and for earl grey tea, hot) but you can get a decent answer to “Alexa, where is my phone?” (assuming you’ve installed the relevant app).

Most of the tutorials out there for developing your own Alexa Skill require a lot of digging around on Amazon Web Services, learning some nodejs, and getting knee deep in lambdas (Amazon’s Functions as a Service/serverless architecture solution).

In this article I’ll show you how to develop your own Alexa Skill with just your laptop and a json file.

Getting Started

The first thing we need to do is go through the Alexa Developer Portal to set up our new skill.

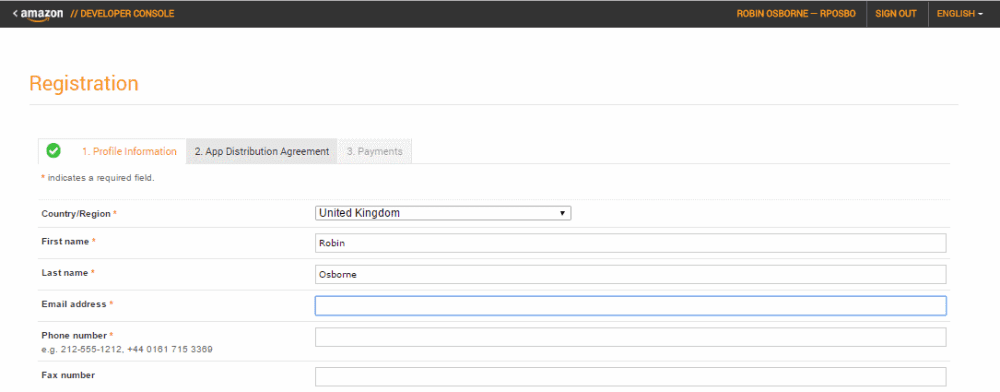

Head over to developer.amazon.com and sign up.

If you’ve not been here before you’ll be asked to fill in a few screens of information including some terms and conditions and say whether you plan to charge people to use your skill.

Now that you’re on the developer portal, tap on “Alexa” in the top menu:

Then “Alexa Skills Kit – Get Started”

Now hit Add a New Skill

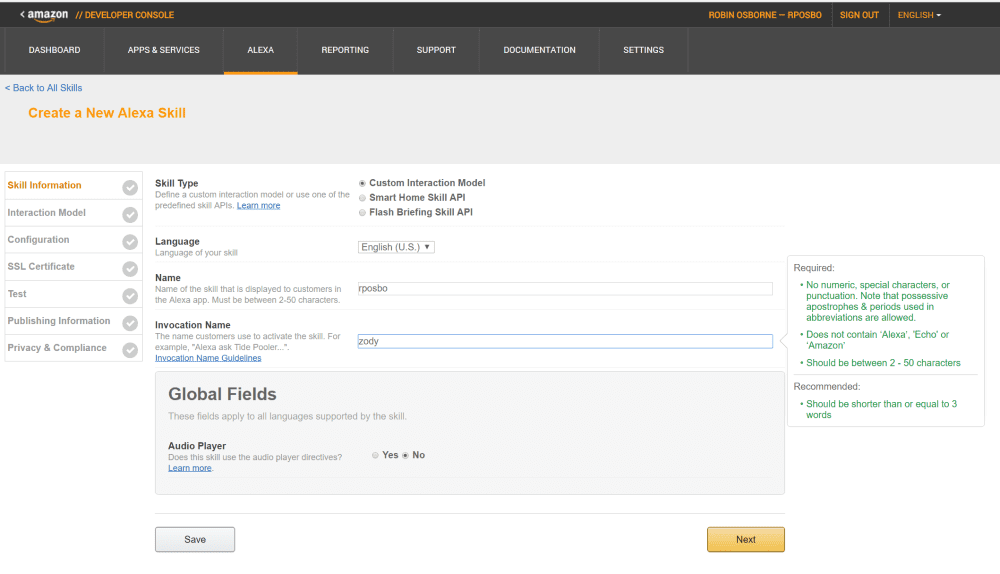

Skill Information

Give your new skill a name and a key word. The key word – also called the invocation word or term – is what Alexa listens out for in order to trigger your skill; for example “Spotify” or “Uber”. Or “Jeff”.

It helps to think of a name and key word that are relevant to your skill.

I’m going to build a Chinese Zodiac skill, so I’ll use the key word “Zody”; that way when someone wants to invoke it they can say “Alexa, ask Zody…”

Notice there are restrictions on the invocation word:

Required:

• No numeric, special characters, or punctuation. Note that possessive apostrophes & periods used in abbreviations are allowed.

• Does not contain ‘Alexa’, ‘Echo’ or ‘Amazon’

• Should be between 2 – 50 characters

Recommended:

• Should be shorter than or equal to 3 words
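If you want to sanity-check a candidate invocation word before typing it into the portal, the rules above can be approximated in a few lines of Python. This is only a rough sketch of my reading of those rules: the function name `check_invocation_name` is made up for illustration, and the portal’s own validation is the real authority.

```python
# Rough approximation of the invocation word rules listed above.
# check_invocation_name is a hypothetical helper, not part of any Amazon tooling.
RESERVED_WORDS = ("alexa", "echo", "amazon")
ALLOWED_PUNCTUATION = " '.’"  # spaces, possessive apostrophes, periods


def check_invocation_name(name):
    """Return a list of problems; an empty list means the name passes this check."""
    problems = []
    if not all(ch.isalpha() or ch in ALLOWED_PUNCTUATION for ch in name):
        problems.append("contains digits, special characters, or disallowed punctuation")
    lowered = name.lower()
    for word in RESERVED_WORDS:
        if word in lowered:
            problems.append(f"contains reserved word '{word}'")
    if not 2 <= len(name) <= 50:
        problems.append("must be between 2 and 50 characters")
    if len(name.split()) > 3:
        problems.append("recommended: three words or fewer")
    return problems
```

Calling `check_invocation_name("Zody")` returns an empty list, while something like "Zody 2" is flagged for the digit.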

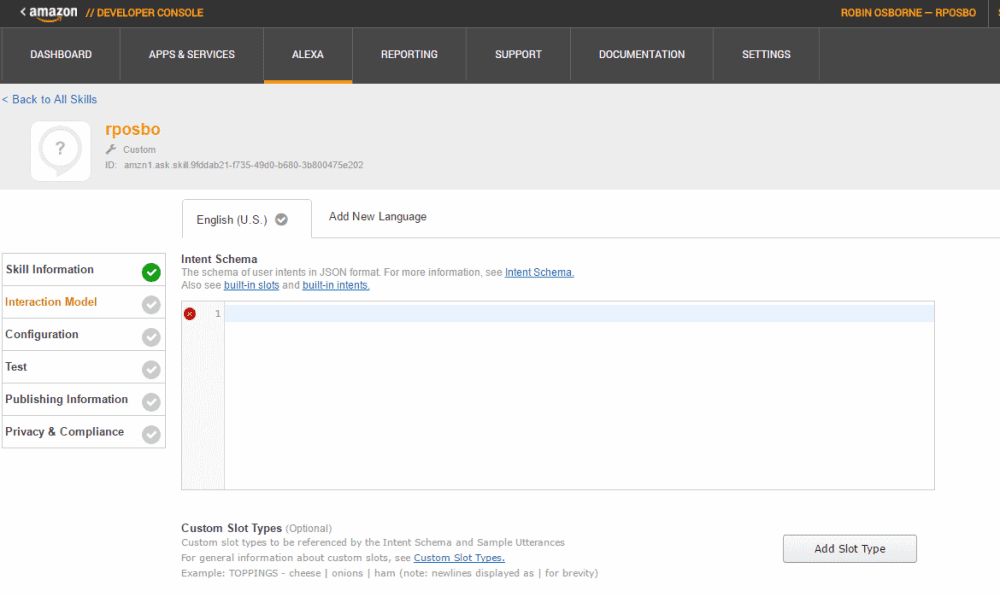

Skill Interaction Model

Intents and Slots

If you’ve not developed chatbots before these terms might be unfamiliar.

An intent is essentially the underlying method or function that you want to call.

A slot or entity is a variable or parameter value that will be passed to that function.

If someone asked Alexa “what’s the weather like in London tomorrow”, the slots could be “place” with a value of “London” and “time” with a value of “tomorrow”.
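To make the “intent is a function, slots are its parameters” idea concrete, here is a minimal Python sketch. The handler `get_weather_forecast`, the intent name `GetWeatherForecast`, and the `dispatch` helper are all hypothetical names chosen for illustration; nothing here is an Alexa API.

```python
# Illustration only: an intent name selects a function, slot values become its arguments.
def get_weather_forecast(place, time):
    # Placeholder body; a real skill would call a weather service here.
    return f"Looking up the weather in {place} for {time}"


# Map each intent name to the function that handles it.
INTENT_HANDLERS = {"GetWeatherForecast": get_weather_forecast}


def dispatch(intent_name, slots):
    """Look up the handler for an intent and pass the slots as keyword arguments."""
    handler = INTENT_HANDLERS[intent_name]
    return handler(**slots)
```

With this in place, `dispatch("GetWeatherForecast", {"place": "London", "time": "tomorrow"})` calls the weather function with both slot values filled in.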

Enter your intent structure in json format like this:

    {
      "intents": [
        {
          "intent": "GetZodiacAnimal",
          "slots": [
            {
              "name": "Year",
              "type": "AMAZON.FOUR_DIGIT_NUMBER"
            }
          ]
        }
      ]
    }

You can see that intents is an array of intent and slots is an array of name and type pairs. In my json above I’ve defined one intent – GetZodiacAnimal – and one variable – Year.

The slot type can be either one of the predefined Amazon types, such as AMAZON.FOUR_DIGIT_NUMBER, or one of your own choosing – a custom type.

Custom Slot Type

For example, I could define a type called LIST_OF_ANIMALS and configure it with a set of possible values such as:

    Rat
    Ox
    Tiger
    Dragon

This is a great way to define a small set of possible values that you want to pick up.

Utterances

Now we need to set up the skill with possible phrases that would fire off our intents.

Utterances are mappings between an intent and possible phrases that could map to the intent.

For example, the phrase “what’s the weather like in London tomorrow” could map to an intent “GetWeatherForecast”. Our consuming backend code would then pick up the intent from the Alexa response and fire the appropriate method.

The format for defining an utterance for your Alexa Skill is:

    Intent phrase {slot}

Anywhere that you might have a variable, replace this with the name of the appropriate slot. For the “weather” example this could be:

    GetWeatherForecast what's the weather like in {place} {time}

The utterances are case sensitive; make sure your slots and intents here match the cases used in your intent json, above.

The example utterances for my Chinese Zodiac skill are:

    GetZodiacAnimal give me the animal for {Year}
    GetZodiacAnimal what was the zodiac for {Year}
    GetZodiacAnimal in {Year} what was the Chinese animal
    GetZodiacAnimal what chinese animal was it for {Year}

That should do for intents and utterances.
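Because intent and slot names are case sensitive, it can be worth checking your utterances against your intent json before pasting them into the portal. The following Python sketch does that for the Zodiac skill; `find_mismatches` is a hypothetical helper of my own, and it only understands the simple `Intent phrase {slot}` format described above.

```python
import json
import re

# The intent json and sample utterances from this article.
INTENT_SCHEMA = json.loads("""
{
  "intents": [
    {
      "intent": "GetZodiacAnimal",
      "slots": [{"name": "Year", "type": "AMAZON.FOUR_DIGIT_NUMBER"}]
    }
  ]
}
""")

SAMPLE_UTTERANCES = """
GetZodiacAnimal give me the animal for {Year}
GetZodiacAnimal what was the zodiac for {Year}
GetZodiacAnimal in {Year} what was the Chinese animal
GetZodiacAnimal what chinese animal was it for {Year}
"""


def find_mismatches(schema, utterances):
    """Report intents or slots used in utterances that the schema does not define."""
    slots_by_intent = {
        item["intent"]: {slot["name"] for slot in item.get("slots", [])}
        for item in schema["intents"]
    }
    problems = []
    for line in utterances.strip().splitlines():
        line = line.strip()
        if not line:
            continue
        # The first word is the intent name, the rest is the spoken phrase.
        intent, _, phrase = line.partition(" ")
        if intent not in slots_by_intent:
            problems.append(f"unknown intent: {intent}")
            continue
        for slot in re.findall(r"\{(\w+)\}", phrase):
            if slot not in slots_by_intent[intent]:
                problems.append(f"unknown slot {{{slot}}} in: {line}")
    return problems
```

Running `find_mismatches(INTENT_SCHEMA, SAMPLE_UTTERANCES)` gives an empty list; change `{Year}` to `{year}` in one utterance and it is reported.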

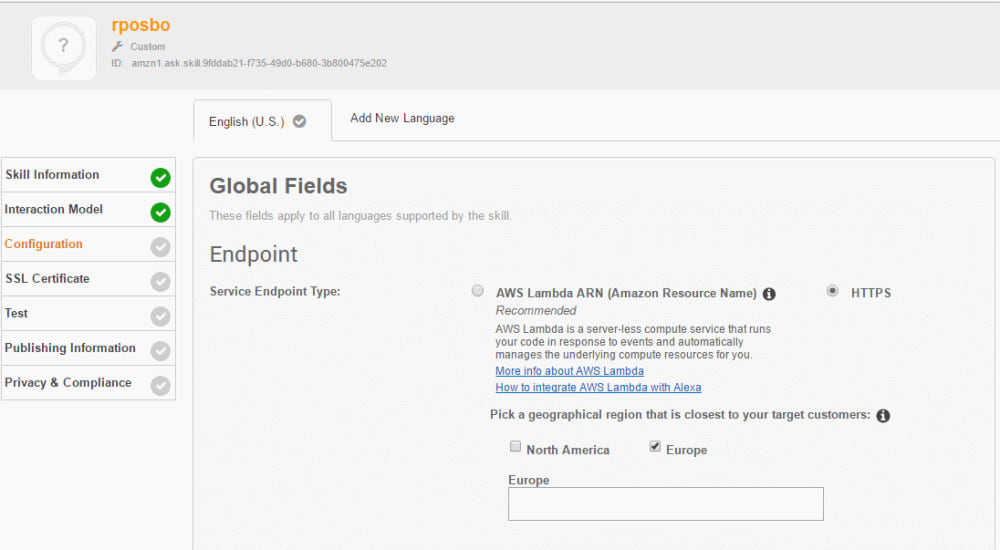

Endpoint Configuration

At this point almost every other Alexa tutorial will point you towards setting up an AWS account and copy pasting some nodejs into a lambda, then configuring that lambda to fire based on an Alexa event.

Sounds like work. Let’s not do that. Instead, choose the HTTPS option.

At this point we have to enter an HTTPS endpoint, so let’s create one, the easy way.

First select your geographical region (where you expect most of your users to be); I’m guessing this determines which data centre your skill is served from.

I’m selecting Europe.

ngrok

Ngrok is an incredible piece of software that creates a secure tunnel through the internets to localhost. It’s nuts.

I introduced it in detail in a previous article on debugging botframework, so I’ll not go into too much detail here.

Head over to ngrok.com and download the executable. Open a command prompt in the same directory and fire off this command:

    ngrok.exe http 8080

This will cause ngrok to start sharing your local port 8080 over HTTP and HTTPS with a unique URL.

In a few seconds you should see your ngrok URL appear; copy that and paste it into the Alexa Skill “endpoint”.

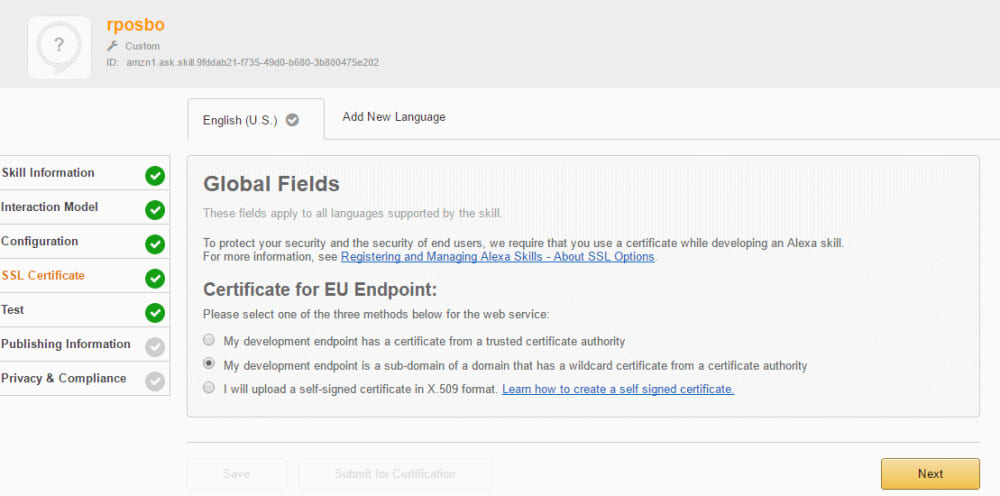

SSL Certificate

Since you’re using ngrok just select the “my development endpoint is a subdomain…” option.

Test

Now we get to test our non-existent skill! This screen is great fun thanks to the Voice Simulator; try entering a phrase and see how it sounds. Subtle punctuation can make a big difference; try both of these to hear what I mean:

“The Chinese Zodiac animal for 1978 is horse”

and

“The Chinese Zodiac animal for 1978, is horse”

That comma makes it sound much more human, in my opinion.

Service Simulator

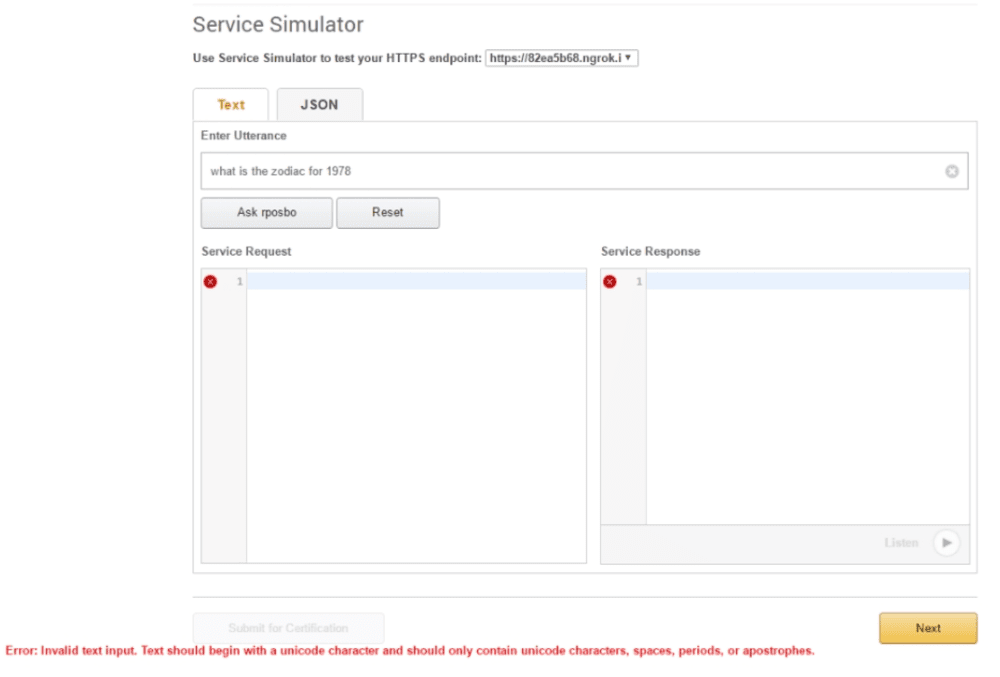

Now let’s enter a test utterance and see what happens. Try “what is the zodiac for 1978”.

Error: invalid text input. Text should begin with a Unicode character and only contain Unicode characters, spaces, periods, and apostrophes

Uh, wut?? What’s wrong with my utterance?..

Now let’s try:

“what is the zodiac for nineteen seventy eight”.

No error. Weird huh? I guess you’re simulating what a user would actually say, and “1978” isn’t explicit in how it would be pronounced; it could be “one nine seven eight”, or “nineteen seventy eight”, or “one thousand nine hundred and seventy eight”.

Top tip: avoid numeric characters in utterances
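If you are generating test phrases for the simulator, you can spell the year out in code. The Python sketch below produces the “nineteen seventy eight” style by reading the year as two pairs of digits; that is just one of the pronunciations mentioned above, `year_to_words` is a hypothetical helper, and it only covers four-digit years.

```python
ONES = ["zero", "one", "two", "three", "four", "five", "six", "seven", "eight",
        "nine", "ten", "eleven", "twelve", "thirteen", "fourteen", "fifteen",
        "sixteen", "seventeen", "eighteen", "nineteen"]
TENS = ["", "", "twenty", "thirty", "forty", "fifty", "sixty", "seventy",
        "eighty", "ninety"]


def two_digits(n):
    """Spell out a number from 0 to 99."""
    if n < 20:
        return ONES[n]
    tens, ones = divmod(n, 10)
    return TENS[tens] if ones == 0 else f"{TENS[tens]} {ONES[ones]}"


def year_to_words(year):
    """Spell a four-digit year as two pairs, e.g. 19|78."""
    if not 1000 <= year <= 9999:
        raise ValueError("only four-digit years are supported")
    high, low = divmod(year, 100)
    if low == 0:
        # Round centuries read as "nineteen hundred"; 2000 becomes "twenty hundred",
        # which may not be the form you want.
        return f"{two_digits(high)} hundred"
    if low < 10:
        return f"{two_digits(high)} oh {ONES[low]}"
    return f"{two_digits(high)} {two_digits(low)}"
```

For example, `"what is the zodiac for " + year_to_words(1978)` gives a phrase the Service Simulator accepts.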

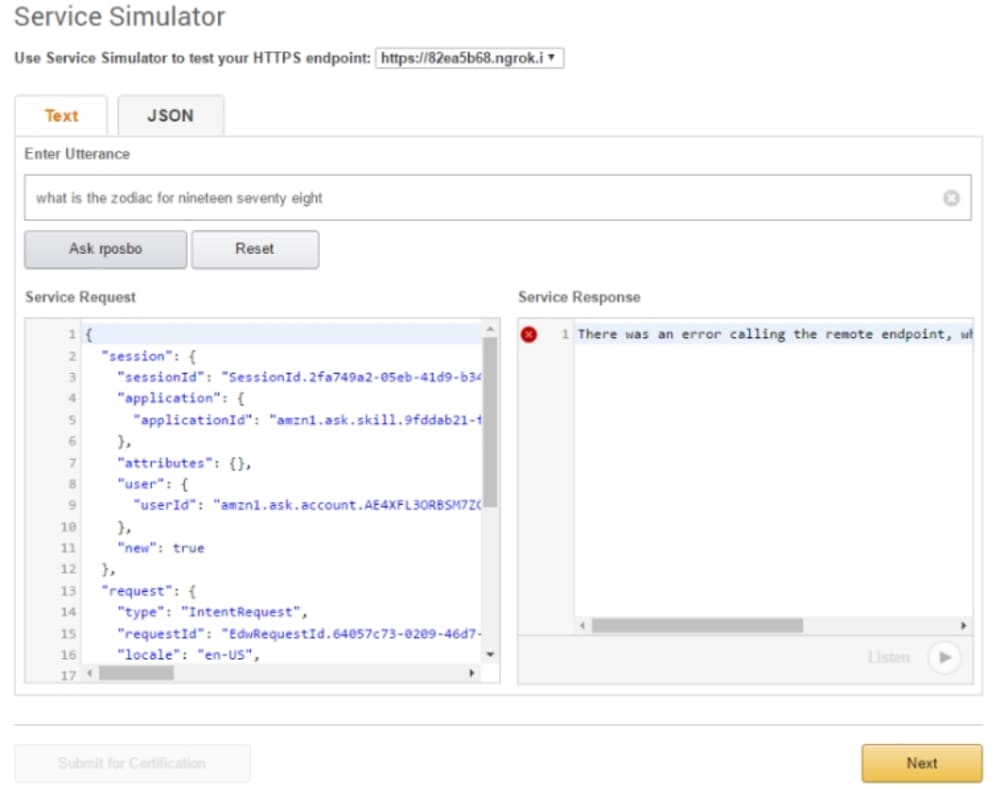

Now that request should go through, but there will not be anything responding on the other end:

Notice the request body in the Service Request box; now you know the structure of the request.

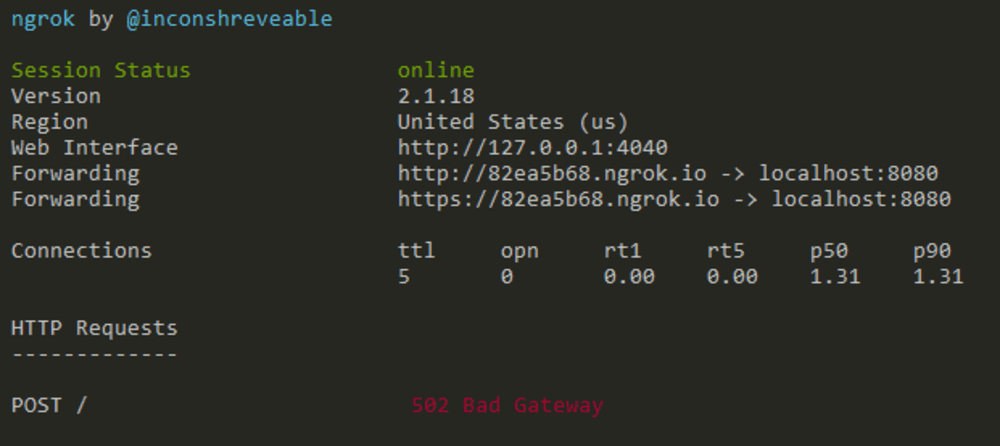

Let’s investigate ngrok; open up the cli and you should see that a request was received but got a 502 response, because nothing is listening on port 8080 yet.

This means the request actually hit our computer! Woohoo!

Ngrok has a web interface that’s running on port 4040; head over to http://localhost:4040 to get a nice web view of your ngrok request and response.

Now you can inspect the request headers and replay a captured request.
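The same port 4040 also serves a small local API, which is handy if you would rather read your public URL from a script than from the console. The Python sketch below assumes ngrok’s local `/api/tunnels` endpoint and its `tunnels` / `public_url` fields; the helper names and the sample URL in the usage note are made up, so check the output against what your ngrok version actually returns.

```python
import json
from urllib.request import urlopen

# Assumption: the ngrok agent is running locally with its default inspection port.
NGROK_API = "http://localhost:4040/api/tunnels"


def pick_https_url(payload):
    """Return the first HTTPS public URL from a tunnels listing, or None."""
    for tunnel in payload.get("tunnels", []):
        url = tunnel.get("public_url", "")
        if url.startswith("https://"):
            return url
    return None


def current_https_url(api_url=NGROK_API):
    """Ask the local ngrok agent for its tunnels and pick the HTTPS one."""
    with urlopen(api_url, timeout=5) as response:
        return pick_https_url(json.load(response))
```

With ngrok running, `current_https_url()` should give you the value to paste into the Alexa Skill endpoint; `pick_https_url({"tunnels": [{"public_url": "https://example.ngrok.io"}]})` shows the selection logic on its own.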

Notice there’s a sessionId in the request body; we can use this in future to keep context in a conversation, like mapping this to a conversationId in botframework.
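To see which parts of that request body matter, here is a small Python sketch that pulls out the session id, intent name, and slot values. The sample request is a trimmed, hand-written stand-in rather than a real capture (the session id in particular is invented), and `summarise` is a hypothetical helper; compare the field names with the body you see in the Service Request box or in ngrok.

```python
import json

# Trimmed, hand-written example of an intent request; not a real capture.
SAMPLE_REQUEST = json.loads("""
{
  "version": "1.0",
  "session": {"new": true, "sessionId": "SessionId.example-1234"},
  "request": {
    "type": "IntentRequest",
    "intent": {
      "name": "GetZodiacAnimal",
      "slots": {"Year": {"name": "Year", "value": "1978"}}
    }
  }
}
""")


def summarise(body):
    """Extract the fields a backend would typically care about."""
    request = body.get("request", {})
    intent = request.get("intent", {})
    # Flatten {"Year": {"name": "Year", "value": "1978"}} to {"Year": "1978"}.
    slots = {name: slot.get("value") for name, slot in intent.get("slots", {}).items()}
    return {
        "session_id": body.get("session", {}).get("sessionId"),
        "request_type": request.get("type"),
        "intent": intent.get("name"),
        "slots": slots,
    }
```

The `session_id` value is the one you would hang conversation context on.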

Canned

Now that you’ve got a request hitting your computer, let’s make a response that Alexa can understand.

To do this I use the fantastic canned; it maps an incoming request to a file on disk using a naming convention, and responds with the contents of that file.

For example, a GET request for / with the Accept request header set to text/html would map to an html file called index.get.html.

To install canned you can just use npm:

    npm install -g canned

Let’s test this by creating that dummy html file called index.get.html and paste in “Hello World” (or something more original). In that same directory run:

    canned -p 8080

This maps port 8080 to the current directory. If you open a browser and head to “http://localhost:8080/” you should see your Hello World html page, and your request should be logged in the canned command window.

Now let’s try that again using your ngrok URL: make a request and you should see the same response in your browser as well as the request and response being captured in the ngrok command window and the ngrok web interface.

Wiring it all up

Check the ngrok request and response from Alexa and you’ll see it makes a POST request and it uses application/json; create a json file called index.post.json in the same directory you’re running canned against, and paste in something like this json:

    {
      "version": "1.0",
      "response": {
        "outputSpeech": {
          "type": "PlainText",
          "text": "the Chinese animal for the year 1978, is Horse"
        },
        "shouldEndSession": true
      }
    }

This is pretty much the minimal response structure for an Alexa skill.
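If you would rather generate that file than hand-edit it, the same structure is easy to build in Python. This is a small sketch with hypothetical helper names (`build_response`, `to_canned_file_contents`); it produces the same fields as the json above and nothing more.

```python
import json


def build_response(text, end_session=True):
    """Build the minimal response structure shown above."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def to_canned_file_contents(text):
    """Serialise the response as the text you would save in index.post.json."""
    return json.dumps(build_response(text), indent=2)
```

Saving the output of `to_canned_file_contents("the Chinese animal for the year 1978, is Horse")` as index.post.json gives canned the same file as the hand-written version.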

With that file saved, try the Service Simulator again; hopefully you’ll see a request hit canned, a request and response go through ngrok, and a response appear in the Service Simulator.

Echo Testing

If you own an Echo or Echo Dot and you signed in to the Amazon Developer Portal using the same account as your Echo, then you can open the Alexa app (or head to alexa.amazon.com in your browser), tap “Skills”, then “Your Skills”, where you should see your own in-development skill appear!

Enable it, and ask your Echo to use it by using one of the utterances you defined, e.g.

“Alexa ask Zody what the Chinese Zodiac animal is for 1978”

If you get a confused response from Alexa then you may need to check your location. Notice your skill currently has a “devUS” badge on it? Even if you selected “Europe” way back at the start?

Head back to the developer portal and, where it currently shows “EN-US” as the language, tap to add a new tab for “EN-GB”… (Face palm).

Duplicate everything from “EN-US” to “EN-GB”, go back to your Alexa app and you should see your skill now has “devUK”!

Try asking Alexa one of your utterances again and you should see it hit canned, ngrok, and give a verbal response from your Echo!

Summary

We just created a dummy Alexa skill that answers a real voice command to our Echo with a canned response served from our local computer.

Hopefully you can now see how easy it would be to have a webapi endpoint to enable dynamic responses.
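As a taste of what a dynamic endpoint could look like, here is a minimal Python sketch that could sit on port 8080 in place of canned. It is an illustration under several assumptions rather than a finished skill: the helper names are my own, the zodiac lookup ignores the fact that the Chinese new year starts in late January or February, and it does none of the request verification a published skill would need.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

ANIMALS = ["Rat", "Ox", "Tiger", "Rabbit", "Dragon", "Snake",
           "Horse", "Goat", "Monkey", "Rooster", "Dog", "Pig"]


def zodiac_animal(year):
    # 1900 was a Rat year, so count forward through the 12-year cycle from there.
    return ANIMALS[(year - 1900) % 12]


def handle_alexa_request(body):
    """Turn a parsed Alexa request body into a minimal response dict."""
    intent = body.get("request", {}).get("intent", {})
    year = intent.get("slots", {}).get("Year", {}).get("value")
    if intent.get("name") == "GetZodiacAnimal" and year and str(year).isdigit():
        text = f"the Chinese animal for the year {year}, is {zodiac_animal(int(year))}"
    else:
        text = "Sorry, I did not catch the year"
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": True,
        },
    }


class AlexaHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Read the json body that Alexa posts, then answer with json.
        length = int(self.headers.get("Content-Length", 0))
        body = json.loads(self.rfile.read(length) or b"{}")
        payload = json.dumps(handle_alexa_request(body)).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json;charset=UTF-8")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def serve(port=8080):
    """Start the server; stop canned first so the port is free."""
    HTTPServer(("localhost", port), AlexaHandler).serve_forever()
```

Call `serve()` with ngrok still pointing at port 8080 and the Service Simulator should get an answer that changes with the year you ask about.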

In the next article we’ll map Alexa to a botframework chatbot!

Great thanks

Hi Robin,

This tutorial is awesome!! I followed this tutorial and learnt lot. Thank you!

I have one small issue here and hope you don’t mind answering my query.

I created ASP.Net Core Web Application (WebAPI) project. In my controller, I wanted to just analyze what request is coming from Alexa. So I created POST method with dynamic object as a parameter. I could see the request on 127.0.0.1:4040 portal but when the request is hitting to my POST method, the dynamic object is not populating with anything. I tried to pull properties/methods from reflection but all are null. Could you please elaborate what can be wrong? Thank you!

Jay

Hi Robin, Sorry for multiple comments. I converted JSON request that I could see in my ngrok inspect portal to C# class (e.g. AlexaRequest) and tried to provide object of that class as a parameter to my POST method and thought that my Request, Context and so on objects will be populated but seems all these objects are null. So would appreciate if you can help to understand and throw some light on what’s missing. Thank you again.